Why Sovereign AI Is the Most Important Infrastructure Problem of Our Time

They’re Taking It From You. You Just Haven’t Noticed Yet.

Your data is being taken from you.

Every query you send to a centralized AI platform — every business problem you think out loud, every medical question you ask, every strategy you test, every document you summarize — is being collected, retained, and used to train systems you will never own, governed by people you will never elect, for profit you will never see.

Your intellectual property is being taken from you.

The proprietary knowledge embedded in how your company asks questions, structures problems, and interprets answers is signal. It is training data. The model gets smarter because of you. The platform captures that value. You get a monthly subscription bill.

Your agency is being taken from you.

When the AI that runs your business, tutors your children, advises your doctors, and shapes your news feed is owned by a corporation whose incentives are not yours — you don’t have AI. You have a dependency. A landlord relationship dressed up as a productivity tool.

And machine learning needs to be monetized — but not by them. By you.

This is the founding conviction behind everything we’ve built at Gaia. Not just a technical disagreement about infrastructure architecture. A fundamental belief that the intelligence layer of the internet belongs to the people who use it, contribute to it, and depend on it — not to a handful of companies in San Francisco and Seattle who got there first.

I want to explain exactly why this matters, what sovereign AI actually means, and why the window to get the architecture right is closing faster than most people realize.

The Five Ways Centralized AI Fails You

The problems with centralized AI are not edge cases or theoretical concerns. They are structural. They are happening now. And they compound.

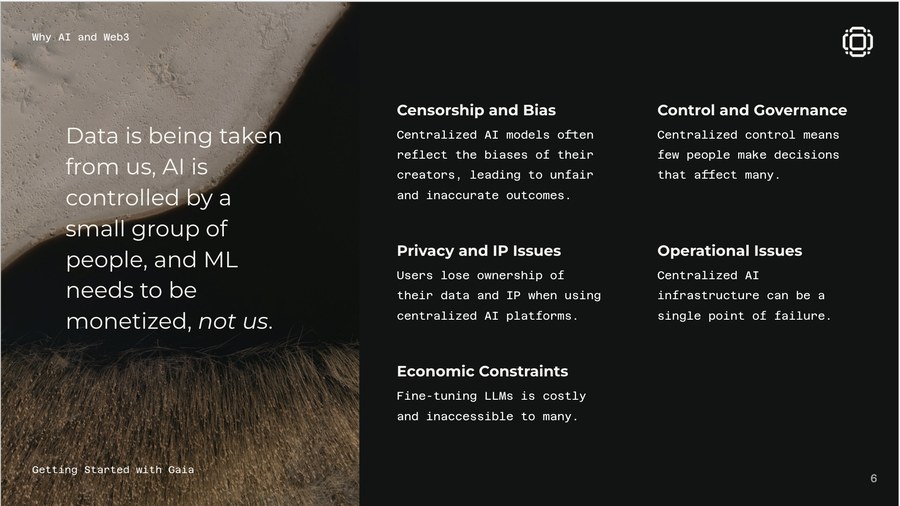

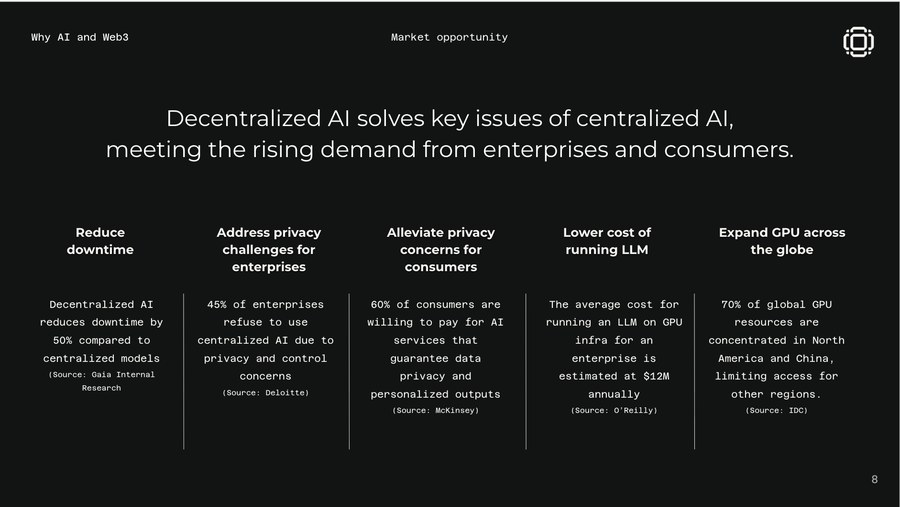

Censorship and Bias. Centralized AI models reflect the worldview of the people who built them — their cultural assumptions, their political priors, their definition of what counts as truth. A model trained by a team in one city, on data scraped from one dominant culture, that then gets deployed as the reasoning layer for global healthcare, finance, and governance — that model doesn’t just reflect bias. It exports it, at infrastructure scale, to every place it touches. And because the training process is opaque, you can rarely trace where the bias came from or how to correct it. You just have to accept the outputs.

Control and Governance. A small group of people makes decisions that affect billions. They decide what the model will and won’t do. They decide what topics are too sensitive to engage with. They decide when to deprecate a model that your business is built on. They decide what the terms of service say and when they change. You agreed to those terms — probably without reading them — and you have no meaningful recourse when the decisions don’t go your way. This is not governance. It is benevolent dictatorship, and the benevolent part is not guaranteed.

Privacy and IP. When your data enters a centralized system, you lose ownership of it in any meaningful sense. You may have legal protections in theory. In practice, the data is processed, retained, and used in ways you cannot audit. Your proprietary research, your customer interactions, your business strategy, your medical history — all of it becomes input to a training pipeline you have no visibility into. The platform’s privacy policy is not your friend. It is a legal instrument designed to protect the platform.

Operational Issues. Centralized AI infrastructure is a single point of failure. When the API goes down — and it does — everything that depends on it goes down with it. When pricing changes overnight, your unit economics break without warning. When a model is updated or deprecated, your carefully tuned application behavior changes in ways you didn’t choose. You have built your business on someone else’s infrastructure, and they can change the foundation without asking.

Economic Constraints. Fine-tuning large language models is extraordinarily expensive. Running inference at scale on centralized cloud infrastructure is extraordinarily expensive. The economics of AI right now are designed to keep the most powerful capabilities in the hands of the largest organizations — and to keep everyone else dependent on APIs that extract value from every call. The average enterprise spends millions annually just to access AI capabilities that should, by now, be infrastructure-level commodities.

These are not five separate problems. They are five symptoms of the same root cause: the intelligence layer of the internet was built for extraction, not participation.

What Sovereign AI Actually Means

The word “sovereignty” is doing a lot of work in this industry right now, and it’s worth being precise.

Sovereign AI is not about nationalism. It is not about building a separate internet for every country, or retreating into isolated private networks that can’t talk to each other.

Sovereign AI means three things:

You own the inference. The computation that turns your query into an answer happens on infrastructure you control — your device, your server, your node, or a network of nodes you trust. You are not dependent on a third party’s availability, pricing, or goodwill.

You own the knowledge. The data, context, fine-tuning, and intellectual property that makes an AI system yours — your domain expertise, your proprietary research, your institutional knowledge — lives in infrastructure you control. It is not extracted or trained upon by a platform whose interests may diverge from yours.

You participate in governance. The rules that determine how the AI behaves — what it will and won’t do, who can access it, how it evolves — are not set unilaterally by a corporate board in a closed room. They are determined through open, participatory mechanisms that you have standing in.

This is not a utopian vision. It is an engineering specification. And it is exactly what we are building.

The Nation-State Parallel Nobody Wants to Hear

The closest modern parallel is telecommunications.

In the early internet, there was a real question about whether a handful of companies would own the pipes that carried all global communication. The answer was a combination of open standards, competitive infrastructure, and hard-fought regulatory decisions that ensured no single entity became the chokepoint.

We failed to apply those lessons to social media. The result was algorithmic manipulation, surveillance capitalism, and the outsourcing of public discourse to private platforms accountable to no one but their advertisers.

We failed again with cloud computing. The result is that three companies — Amazon, Microsoft, Google — control the infrastructure that runs most of the world’s digital economy. They are landlords. We are all tenants.

We are on the verge of failing a third time with AI. And this failure is categorically worse — because what’s being centralized is not storage, not compute, not distribution. It’s reasoning. It’s judgment. It’s the capacity to make sense of the world.

Governments are beginning to understand this. The EU AI Act, India’s IndiaAI mission, Southeast Asia’s emerging data sovereignty frameworks — all reflect a growing recognition that a nation’s AI infrastructure is a matter of strategic security, not just economic policy. Countries that outsource their intelligence layer to foreign corporations don’t just accept vendor risk. They accept a form of cognitive dependency that makes everything from national security to public health contingent on the operational decisions of a private entity in a foreign jurisdiction.

But this cannot be solved at the nation-state level alone. The architecture has to be decentralized by design — not because any government mandates it, but because a distributed network is inherently more resilient, more censorship-resistant, and more equitable than any centralized alternative, regardless of who’s running it.

The Open-Source Argument Is Necessary But Not Sufficient

Here is the counterargument I hear most often: The open-source AI movement is already solving this. The weights are downloadable. Anyone can run Llama. Problem solved.

I have enormous respect for open-source AI. It is a critical part of the answer. But it is not the whole answer — and the distinction matters.

Open-source models give you access to the weights. They do not give you sovereign AI capability. The infrastructure required to run those models at production scale — the compute, the deployment tooling, the fine-tuning pipelines, the operational knowledge, the update mechanisms, the governance — remains deeply centralized. Hugging Face is a platform. The models may be open; the ecosystem around them is not.

More importantly: the vast majority of organizations that need sovereign AI capability cannot build it from scratch. A hospital system cannot spin up its own inference infrastructure. A university cannot maintain its own model deployment pipeline. A small business cannot hire the engineering team required to run AI at scale on its own hardware.

The gap between “the weights are available” and “I have sovereign AI capability” is enormous. Bridging that gap is the actual infrastructure problem. And it’s the one we’re solving at Gaia.

The open-source models are the content layer. We are building the protocol layer — the network, the coordination mechanisms, the economic incentives that make decentralized AI actually work for organizations that don’t have a thousand engineers.

The Economic Case Is As Strong As the Ethical One

The sovereign AI conversation is often framed in moral terms — rights, privacy, fairness. Those arguments matter. But the economic case is just as compelling.

The centralized AI model is designed to extract value. You provide the data. You pay the API costs. The training signal — the feedback from your usage that makes the model smarter — accrues to the platform. You are the input. They are the beneficiary.

The decentralized AI model is designed to distribute value. When you run a Gaia node, you contribute compute and earn when your AI services are used. When you deploy domain expertise into the network, you earn when your knowledge is called upon. When you validate outputs, you earn for the service of maintaining trust. The economic model is not extraction — it is participation.

Think of it as the difference between sharecropping and land ownership. In the centralized AI model, you farm the land and pay the landlord. In the sovereign AI model, you own the land. You decide what grows on it. You keep the harvest.

We have over 700,000 active nodes on the Gaia network today. Those are 700,000 participants who chose to be infrastructure, not just consumers — running AI services on their own hardware, deploying their own domain knowledge, earning from their contributions, collectively powering a network that has served more than 17 trillion inferences. That is not a pilot program. That is an economy.

What We’re Building and Why Now

At Gaia, we believe every layer of the AI stack — from request validation to agent deployment — should be economically secured and community governed.

Our network runs across an open mesh of independently operated nodes. Organizations can deploy AI agents on-premise, on-device, or across our decentralized network without surrendering their data or their economics to a centralized platform. Our Active Validation Service ensures outputs are verifiable — you don’t just trust the model, you can verify what it did. Our Domain architecture gives organizations sovereign AI environments with their own policies, their own identity systems, their own metering.

GAIA, our native token, is the coordination layer that makes all of this work at scale — aligning incentives across verifiers, miners, domain operators, and contributors without requiring anyone to trust a central authority. Cryptoeconomic design is purpose-built for exactly this kind of decentralized coordination problem, and we are using it exactly that way.

This is not a research project. It is a production network. We are proving — in production, at scale — that you do not have to choose between AI capability and AI sovereignty.

The Window Is Closing

Here is the hard truth: the window to get this architecture right is closing.

Every day that passes, more of the world’s AI infrastructure gets locked into centralized platforms. The data pipelines deepen. The fine-tuned models embed. The enterprise relationships calcify. The developer ecosystems consolidate. The switching costs compound. And the regulatory capture expands in the direction of whoever’s already in the room.

We have a narrow window — I believe it is two to four years — in which the architectural choices we make collectively will determine whether AI develops as an open, participatory infrastructure or as a set of monopoly utilities controlled by a handful of corporations.

This is not inevitable. The internet was almost a much more closed system. The web was almost proprietary. Open standards won — not because they were destined to, but because people chose to build them and fight for them.

Sovereign AI is that choice. It is the decision to build intelligence infrastructure that nobody controls but everyone can use. Infrastructure that runs where your data lives. Infrastructure governed by rules you helped write. Infrastructure that generates value for the people who contribute to it — not just the platforms that sit above it.

Your data should not be taken from you. Your intelligence should not be owned by someone else. Your AI should work for you.

That is what we are building. And we believe the builders, enterprises, developers, and communities who make this choice alongside us are the ones who will own the next era of intelligence — not just use it.

Matt Wright is the CEO and Co-Founder of Gaia, the decentralized AI platform building sovereign intelligence infrastructure for the agentic economy. Gaia’s network spans over 700,000 active nodes across thousands of domains globally.